The Career Portal for RED ZAC

A case study on recruiting employees

RED ZAC is a network of around 180 specialist retailers with more than 200 owner-managed, medium-sized specialist shops and stores. Across a total of 65,000 m² of retail space, RED ZAC offers the best products in consumer electronics, mobile and telecommunications, PC/multimedia, and household appliances.

Together with its affiliated service workshops and electrical installation businesses, RED ZAC stands for the highest level of quality, service, customer focus, and professionalism.

Its customers can rely on its 3,000 employees to always provide optimal solutions at an ideal price-performance ratio.

200 branches

3,000 employees

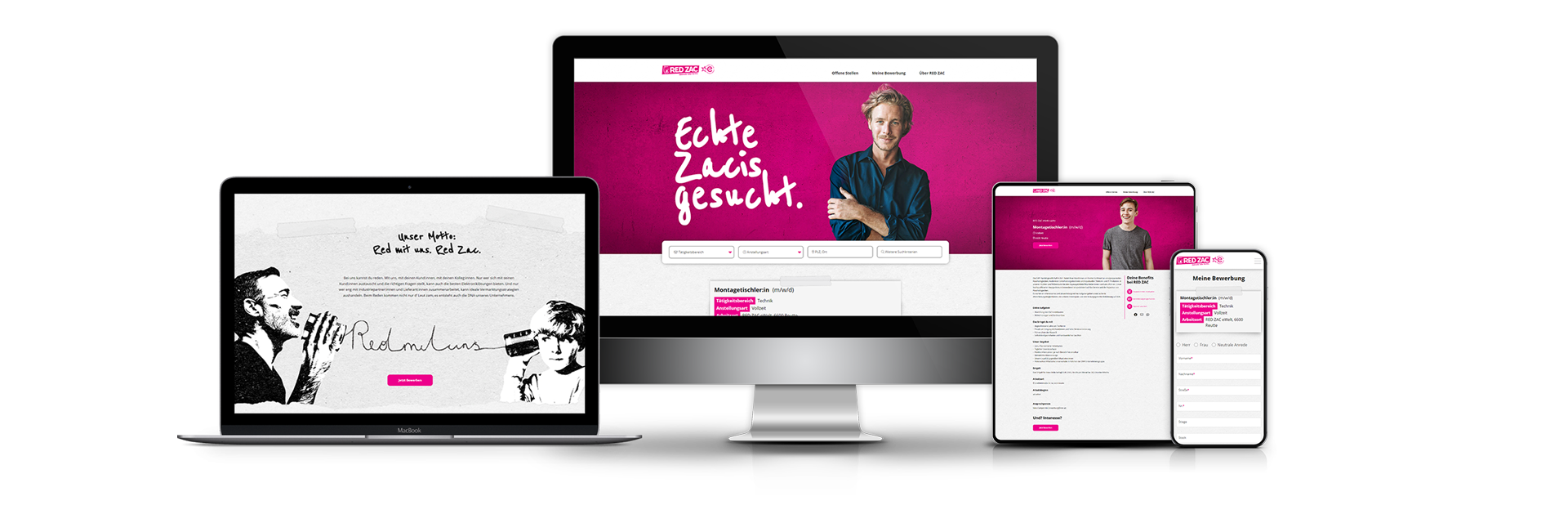

The first impulse to look for a job starts in the mind. The second happens virtually, on a smartphone. Various studies confirm that candidates increasingly search for interesting positions on mobile devices such as smartphones and tablets. Whether in a doctor's waiting room, on public transport, or lying in bed, first contact usually happens on a mobile device. That is why it was important during development that the career platform be responsive, so that potential applicants have a positive experience and feel comfortable on the platform. A good user experience can help keep applicants on the platform longer and increase the chances that they submit an application.

A career portal offers companies and employers many advantages for attracting and recruiting talent.

A career portal helps your company become better known as an employer and thereby increases its visibility on the job market. When potential applicants are looking for work, they often start by checking company websites for open positions and career opportunities.

By publishing open positions on your own career portal and making it easy to apply, your company can collect, manage, and pre-screen candidates' applications in its own secure database.

The management of applications and hiring processes is automated. With automated workflows in place, the application process becomes faster and more efficient, which saves your company time.

By providing information about your company, your corporate culture, and your career opportunities, you can convey a positive image on the job market.

A career portal helps you attract qualified applicants. By communicating the requirements of open positions clearly, you can make sure that applicants with the necessary skills and experience notice your job postings.

In short, a career portal is an important resource for employers to recruit talent, make the application process more efficient, and convey a positive image of the company on the job market.

If you are interested in having a career portal developed for your company, we are happy to help. You can send us a message at any time with your questions or a request for more information. We look forward to hearing from you!